First-class Agents

Agents as a programming primitive

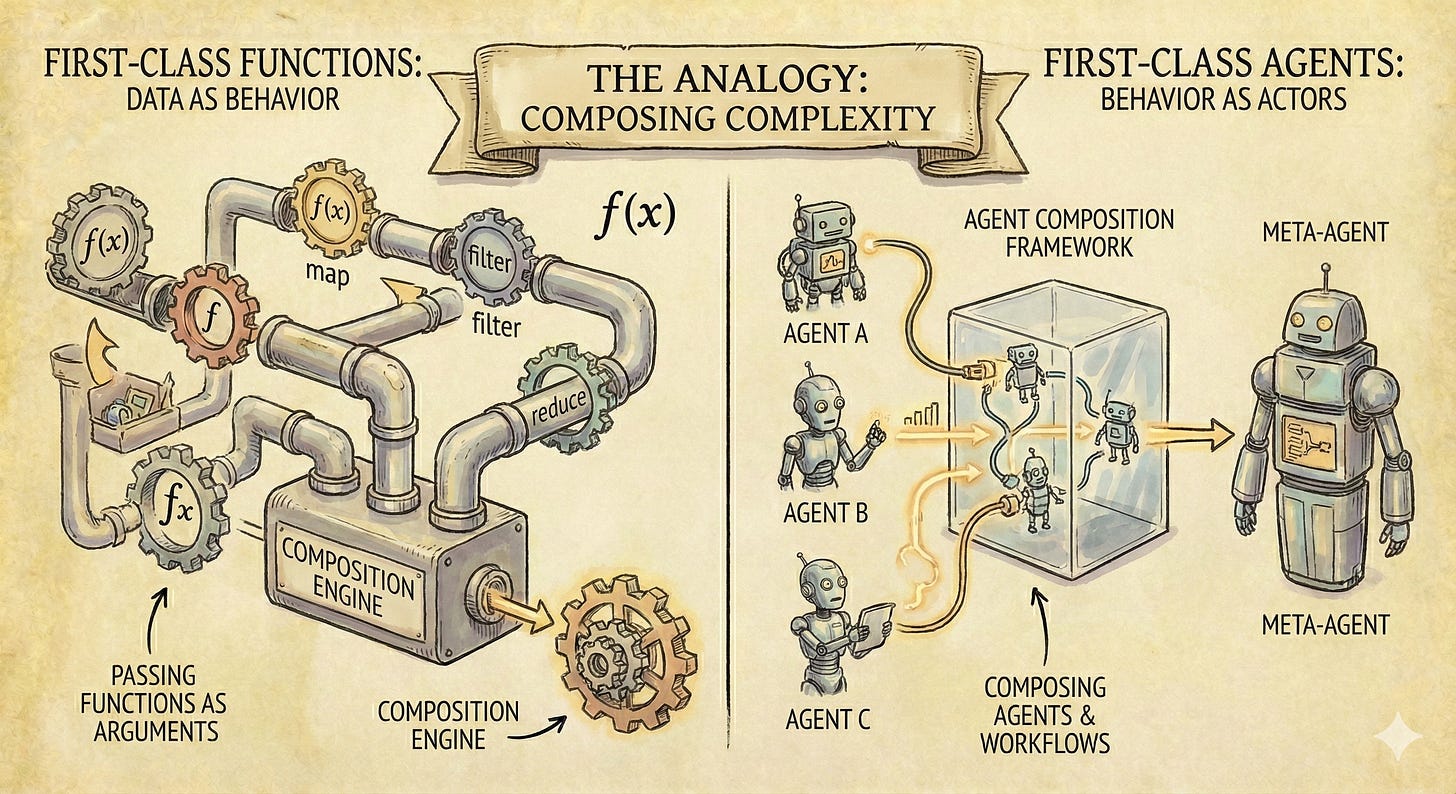

If you’ve written code in a spread of programming languages, you know the difference is deeper than syntax. Some language features make a category of design thinkable that was previously invisible.

First-class functions may be the canonical example. Without them, we see behaviour as fixed at compile time. With them, behaviour is data — composable, passable, dynamic. This is far beyond aesthetic preference and syntactic convenience. Entire paradigms and idioms (functional programming, event-driven architecture, callbacks, closures) were popularised because someone had the foresight to make functions first-class in JavaScript¹.

```typescript
const add = (a: number, b: number) => a + b;
const double = (x: number) => x * 2;

// higher-order function: takes functions, returns a function
const compose = <A, B, C>(f: (b: B) => C, g: (a: A) => B) =>
  (a: A): C => f(g(a));

const doubleAndAdd5 = compose((x: number) => add(x, 5), double);
doubleAndAdd5(3); // 11

// partial application: fix some args now, supply the rest later
const addTo = (base: number) => (x: number) => add(base, x);
const add5 = addTo(5);
add5(10); // 15
```

Agents are at the same inflection point. Most agent systems today treat agents as second-class citizens. You configure them from outside: write a prompt, assign tools, specify a role. The agent runs within those parameters. If you want a different agent, you stop everything and configure a new one. From outside.

How would “first-class agents” work? Agent definitions could become introspectable and manipulable from inside the system at runtime: readable, writable and composable by agents themselves. With a sprinkle of Lisp-style thinking, agents could even modify themselves while the system runs.

This isn’t really “self-modifying AI” in the Skynet sense. It’s a programming primitive. Just as first-class functions let you pass behaviour around, first-class agents let you compose, fork, and restructure agent networks dynamically. Think of it like this:

```typescript
// Assumed helper: sends a prompt to a model and resolves with its reply.
declare function llm(prompt: string): Promise<string>;

// Agent      = a concrete agent: input text in, output text out.
// Agent<Ctx> = an abstract agent: supply Ctx first, get a concrete Agent back.
type Agent<Ctx = never> = [Ctx] extends [never]
  ? (input: string) => Promise<string>
  : (ctx: Ctx) => (input: string) => Promise<string>;

// concrete agent — no context needed, ready to call
const summarizer: Agent = (text) =>
  llm(`Summarize concisely:\n\n${text}`);

// abstract agent — generic over its guidelines, like addTo is generic over its base
type ReviewContext = { rules: string; severity: "strict" | "lenient" };

const reviewer: Agent<ReviewContext> = (ctx) => (input) =>
  llm(`Review (${ctx.severity}):\nRules: ${ctx.rules}\n\n${input}`);

// partial application: supply context → get a concrete Agent
// reviewer(ctx) ≡ addTo(5)
const codeReviewer = reviewer({ rules: "Type safety, error handling", severity: "strict" });
const proseReviewer = reviewer({ rules: "Clarity, tone, brevity", severity: "lenient" });
const a11yReviewer = reviewer({ rules: "WCAG 2.1 AA compliance", severity: "strict" });

// agents compose like functions — because they are functions
const agentPipe = (...agents: Agent[]): Agent =>
  async (input) => {
    let result = input;
    for (const a of agents) result = await a(result);
    return result;
  };

const fullReview = agentPipe(codeReviewer, a11yReviewer, summarizer);
```

What It Looks Like in Practice

You can implement this in many ways², with tradeoffs around iteration speed, security, performance and auditability.

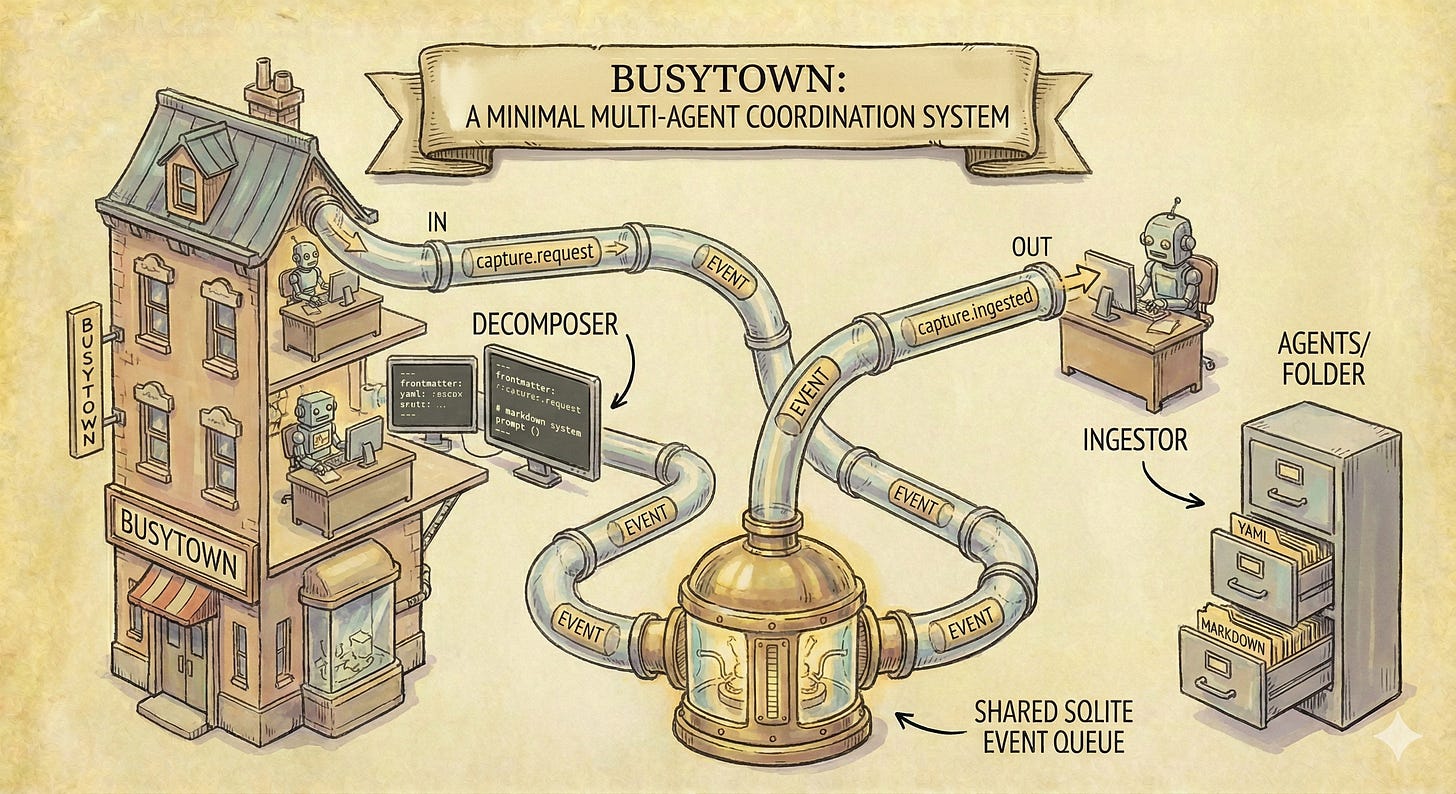

The simplest incarnation I’ve tried is almost comically minimal.

Busytown is a multi-agent coordination system built around a shared SQLite event queue, designed for iteration-in-place. Each agent is a markdown file with YAML frontmatter sitting in an agents/ folder:

```markdown
---
description: Decomposes captured text into atomic notes
listen:
- "capture.request"
emits:
- "capture.ingested"
allowed_tools:
- "Read"
- "Write"
model: sonnet
---
You decompose captured text into atomic,
interconnected notes following the Zettelkasten method...
```

The YAML declares what events this agent listens for, what tools it can use, what model it runs on. The markdown body is the system prompt. That’s the whole agent definition.
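To make "that's the whole agent definition" concrete, here is a minimal TypeScript sketch of loading such a file into a typed record. It is not Busytown's actual loader: `AgentDefinition` and `parseAgentFile` are hypothetical names, and the hand-rolled parser assumes the frontmatter only ever uses `key: value` scalars and `- item` lists like the example above. A real loader would use a proper YAML library.

```typescript
// Hypothetical shape of a loaded agent definition.
interface AgentDefinition {
  description: string;
  listen: string[];
  emits: string[];
  allowedTools: string[];
  model: string;
  systemPrompt: string; // the markdown body
}

// Assumption: frontmatter contains only "key: value" scalars and "- item" lists.
function parseAgentFile(source: string): AgentDefinition {
  const match = source.match(/^---\n([\s\S]*?)\n---\n?([\s\S]*)$/);
  if (!match) throw new Error("missing YAML frontmatter");
  const [, frontmatter, body] = match;

  const fields: Record<string, string | string[]> = {};
  let currentList: string[] | null = null;

  for (const raw of frontmatter.split("\n")) {
    const line = raw.trim();
    if (line === "") continue;
    if (line.startsWith("- ") && currentList) {
      // List item: drop the dash and any surrounding double quotes.
      currentList.push(line.slice(2).trim().replace(/^"(.*)"$/, "$1"));
      continue;
    }
    const colon = line.indexOf(":");
    if (colon === -1) continue;
    const key = line.slice(0, colon).trim();
    const value = line.slice(colon + 1).trim();
    if (value === "") {
      // "key:" with nothing after it opens a list.
      currentList = [];
      fields[key] = currentList;
    } else {
      currentList = null;
      fields[key] = value;
    }
  }

  const list = (k: string): string[] => {
    const v = fields[k];
    return Array.isArray(v) ? v : [];
  };
  const scalar = (k: string): string => {
    const v = fields[k];
    return typeof v === "string" ? v : "";
  };

  return {
    description: scalar("description"),
    listen: list("listen"),
    emits: list("emits"),
    allowedTools: list("allowed_tools"),
    model: scalar("model"),
    systemPrompt: body.trim(),
  };
}

const example = `---
description: Decomposes captured text into atomic notes
listen:
- "capture.request"
emits:
- "capture.ingested"
allowed_tools:
- "Read"
- "Write"
model: sonnet
---
You decompose captured text into atomic notes.`;

const def = parseAgentFile(example);
console.log(def.listen, def.allowedTools);
```

Everything a runtime needs to schedule the agent (which events wake it, which tools it may touch, which model runs it) lives in the record; nothing in it mentions any other agent.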

Agents poll the event queue and respond to matching events. They do their work. They push new events. Other agents pick those up. The primitive is: listen, react, push. Agents know only about events — never about each other.
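The listen, react, push primitive fits in a few dozen lines. This is an illustration under stated assumptions, not Busytown's code: an in-memory array stands in for the SQLite event table, each agent keeps its own cursor into it, and `react` is a plain function where a real agent would call a model. `EventQueue`, `EventAgent`, the two agents, and the `notes.linked` event are hypothetical.

```typescript
// Sketch only: an in-memory array stands in for a SQLite event table.
interface BusEvent {
  id: number;
  type: string;
  payload: string;
}

interface EventAgent {
  name: string;
  listen: string[];
  // Returns new events to push; an agent never addresses another agent.
  react: (event: BusEvent) => { type: string; payload: string }[];
}

class EventQueue {
  private events: BusEvent[] = [];
  private nextId = 1;
  // Per-agent cursor: id of the last event that agent has already seen.
  private cursors = new Map<string, number>();

  push(type: string, payload: string): void {
    this.events.push({ id: this.nextId++, type, payload });
  }

  // One polling pass for one agent; returns how many events it reacted to.
  poll(agent: EventAgent): number {
    const seen = this.cursors.get(agent.name) ?? 0;
    const fresh = this.events.filter((e) => e.id > seen);
    let handled = 0;
    for (const event of fresh) {
      this.cursors.set(agent.name, event.id);
      if (!agent.listen.includes(event.type)) continue;
      for (const out of agent.react(event)) this.push(out.type, out.payload);
      handled++;
    }
    return handled;
  }

  history(): readonly BusEvent[] {
    return this.events;
  }
}

// Two hypothetical agents; string transforms stand in for model calls.
const decomposer: EventAgent = {
  name: "decomposer",
  listen: ["capture.request"],
  react: (e) => [{ type: "capture.ingested", payload: e.payload.toUpperCase() }],
};
const linker: EventAgent = {
  name: "linker",
  listen: ["capture.ingested"],
  react: (e) => [{ type: "notes.linked", payload: `[[${e.payload}]]` }],
};

const queue = new EventQueue();
queue.push("capture.request", "stigmergy");

// Poll every agent until a full pass produces no reactions.
const agents = [linker, decomposer];
let handled = 0;
do {
  handled = agents.reduce((n, a) => n + queue.poll(a), 0);
} while (handled > 0);
```

The polling order deliberately puts `linker` first: the chain still completes, because each agent simply picks up whatever matching events have appeared since its last pass.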

Because agent definitions are files, and files are readable and writable, agent definitions are first-class. Any agent can read, modify, create, or delete any other agent’s definition. The folder structure is the programming language for agent composition. Want shared memory? Just tell your agents, in their system prompts, which files to read and write.
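Since a definition is just text at a path, reading, writing, forking, and deleting agents are ordinary data operations. A minimal sketch, assuming a `Map` of paths to contents stands in for the `agents/` folder (real code would use filesystem reads and writes); the helper names and the `capture.retry` event are hypothetical.

```typescript
// Sketch only: a Map of path -> contents stands in for the agents/ folder.
type AgentFolder = Map<string, string>;

const pathFor = (name: string): string => `agents/${name}.md`;

// Read: any agent can inspect any other agent's definition.
function readAgent(folder: AgentFolder, name: string): string {
  const text = folder.get(pathFor(name));
  if (text === undefined) throw new Error(`no agent named ${name}`);
  return text;
}

// Create or modify: writing the file is the whole operation.
function writeAgent(folder: AgentFolder, name: string, text: string): void {
  folder.set(pathFor(name), text);
}

// Delete: remove the file; returns false if there was nothing to remove.
function deleteAgent(folder: AgentFolder, name: string): boolean {
  return folder.delete(pathFor(name));
}

// Fork: read, transform, write under a new name. The transform is itself a plain function.
function forkAgent(
  folder: AgentFolder,
  source: string,
  target: string,
  edit: (text: string) => string,
): void {
  writeAgent(folder, target, edit(readAgent(folder, source)));
}

const folder: AgentFolder = new Map();
writeAgent(
  folder,
  "zettelkasten",
  [
    "---",
    "listen:",
    '- "capture.request"',
    "emits:",
    '- "capture.ingested"',
    "model: sonnet",
    "---",
    "You decompose captured text into atomic notes.",
  ].join("\n"),
);

// Hypothetical: a gardener agent spins up a variant that reacts to a different event...
forkAgent(folder, "zettelkasten", "zettelkasten-retry", (text) =>
  text.replace('"capture.request"', '"capture.retry"'),
);
// ...and later retires the definition it replaced.
deleteAgent(folder, "zettelkasten");
```

Nothing here restarts or reconfigures a runtime; a polling system would simply see a different set of files on its next pass.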

A Self-Gardening Notebook

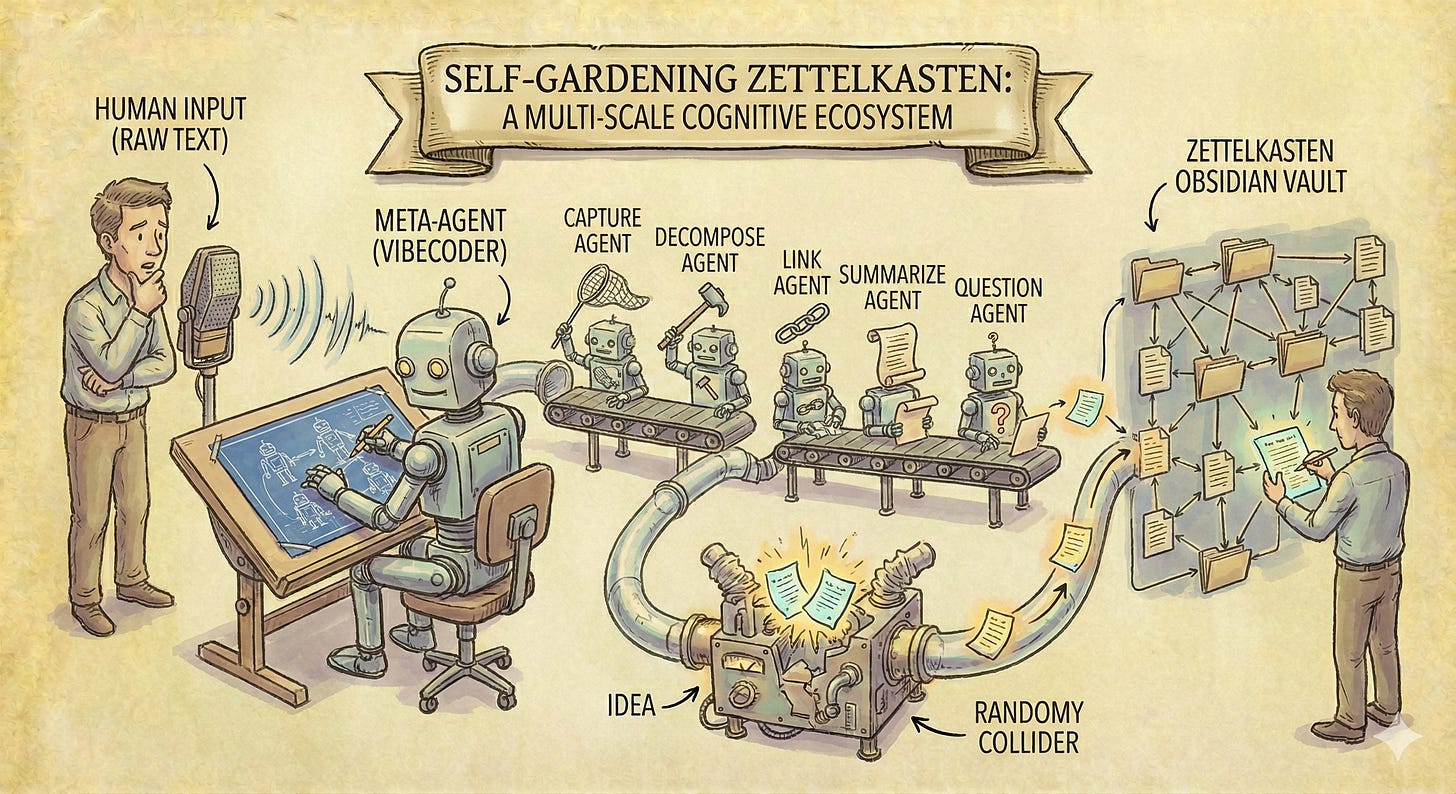

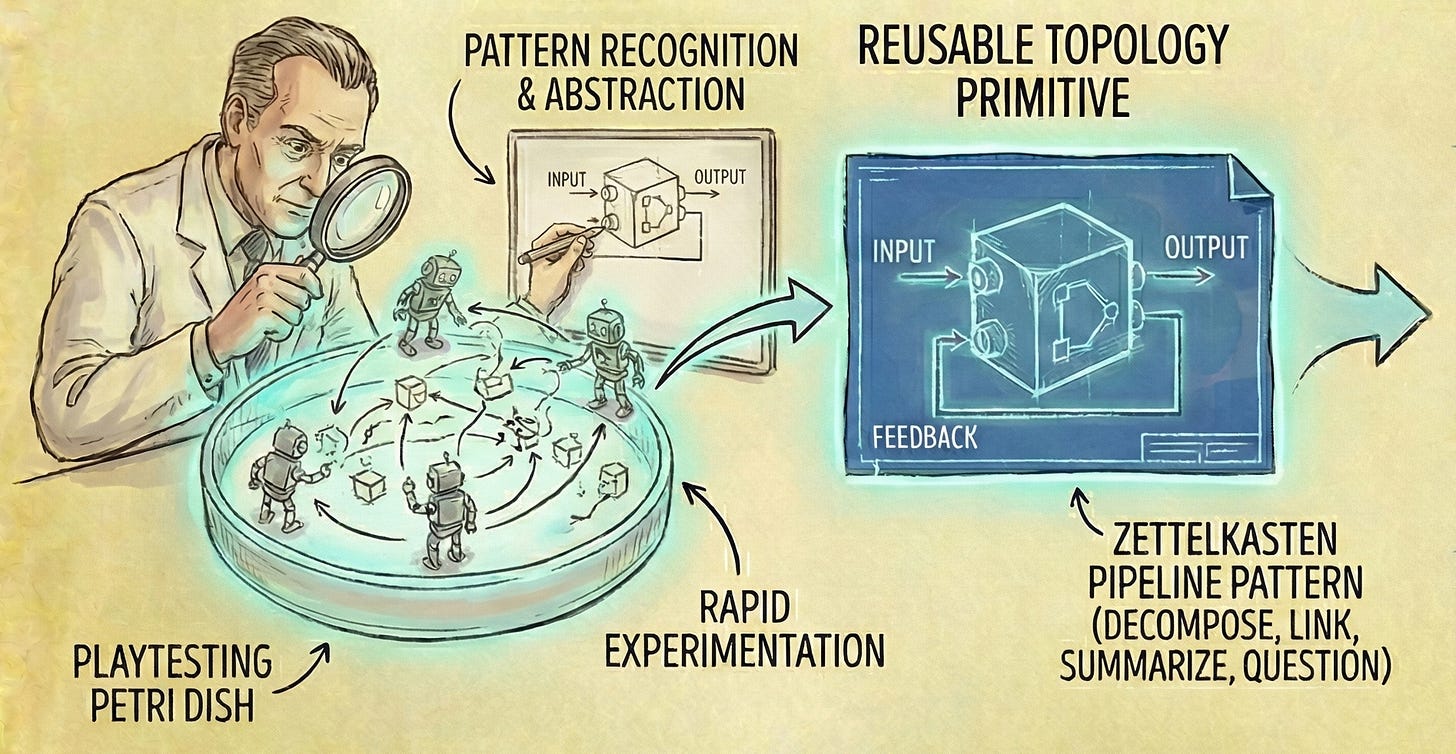

Thinking in terms of first-class agents lets you vibecode your agent networks. Here’s a concrete example I built in under an hour, playtesting included: a self-gardening Zettelkasten notebook.

Five agents forming a pipeline:

Capture → Decompose → Link → Summarize → Question

First, you push raw text into the system via an LLM, a shell script, or manual CLI use. Then a zettelkasten agent decomposes it into atomic notes, searching for existing notes to merge with rather than duplicate. A backlinker agent discovers conceptual connections and adds bidirectional wikilinks. A breadcrumb agent records what changed and identifies emerging patterns, forming a chronological linked list through the vault’s history. A questions agent generates exploratory questions from recent changes.

Plus, an idea collider you can trigger anytime: it picks two random notes, smashes them together using lateral thinking in the style of Oblique Strategies, and produces three new ideas.
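The collider's selection step is small enough to sketch. Assumptions: notes are plain strings, the prompt wording and the names `pickTwoDistinct` and `colliderPrompt` are invented for illustration, and the resulting prompt would then go to a model (not shown). The random source is a parameter so the choice can be made deterministic in tests.

```typescript
// Pick two different items uniformly at random.
function pickTwoDistinct<T>(items: readonly T[], random: () => number = Math.random): [T, T] {
  if (items.length < 2) throw new Error("need at least two notes");
  const i = Math.floor(random() * items.length);
  // Draw j from the remaining length - 1 slots, then skip over i so j !== i.
  let j = Math.floor(random() * (items.length - 1));
  if (j >= i) j++;
  return [items[i], items[j]];
}

// Hypothetical prompt asking a model to collide the two notes.
function colliderPrompt(a: string, b: string): string {
  return [
    "Collide these two notes using lateral, Oblique Strategies-style moves.",
    `Note A: ${a}`,
    `Note B: ${b}`,
    "Produce exactly three new ideas.",
  ].join("\n");
}

const notes = [
  "Termites build mounds without a plan",
  "Event queues decouple producers from consumers",
  "Closures capture their defining context",
];
const [first, second] = pickTwoDistinct(notes);
console.log(colliderPrompt(first, second));
```

Drawing the second index from the remaining slots avoids a retry loop: every distinct pair is equally likely and the function always returns after two random draws.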

Each agent has one concern. One event type in, one out. None of them know the others exist. The vault is also an Obsidian vault — the same files agents operate on are what you browse and read.
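Because no agent names another, the pipeline's wiring exists only implicitly in the `listen`/`emits` declarations. A minimal sketch that recovers the graph from those declarations; apart from `capture.request` and `capture.ingested`, the event names (including the collider's trigger) are hypothetical.

```typescript
interface Declaration {
  name: string;
  listen: string[];
  emits: string[];
}

// Hypothetical declarations for the notebook's agents.
const declarations: Declaration[] = [
  { name: "zettelkasten", listen: ["capture.request"], emits: ["capture.ingested"] },
  { name: "backlinker", listen: ["capture.ingested"], emits: ["notes.linked"] },
  { name: "breadcrumb", listen: ["notes.linked"], emits: ["history.recorded"] },
  { name: "questions", listen: ["history.recorded"], emits: ["questions.generated"] },
  { name: "collider", listen: ["collide.request"], emits: ["ideas.generated"] },
];

// Edge A -> B whenever A emits an event type that B listens for.
function deriveEdges(agents: Declaration[]): string[] {
  const edges: string[] = [];
  for (const from of agents) {
    for (const to of agents) {
      for (const event of from.emits.filter((e) => to.listen.includes(e))) {
        edges.push(`${from.name} -(${event})-> ${to.name}`);
      }
    }
  }
  return edges;
}

console.log(deriveEdges(declarations).join("\n"));
// zettelkasten -(capture.ingested)-> backlinker
// backlinker -(notes.linked)-> breadcrumb
// breadcrumb -(history.recorded)-> questions
```

The collider shows up as an island: it has no incoming edge because it is triggered by hand. Adding an agent that listens for `questions.generated` would extend the graph without touching any existing declaration.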

Is this the ultimate solution? Probably not, but I can iterate on the workflow every time I touch it, as easily as I edit the notes themselves. It’s a petri dish where I can learn what I want from note-taking.

Digital Stigmergy

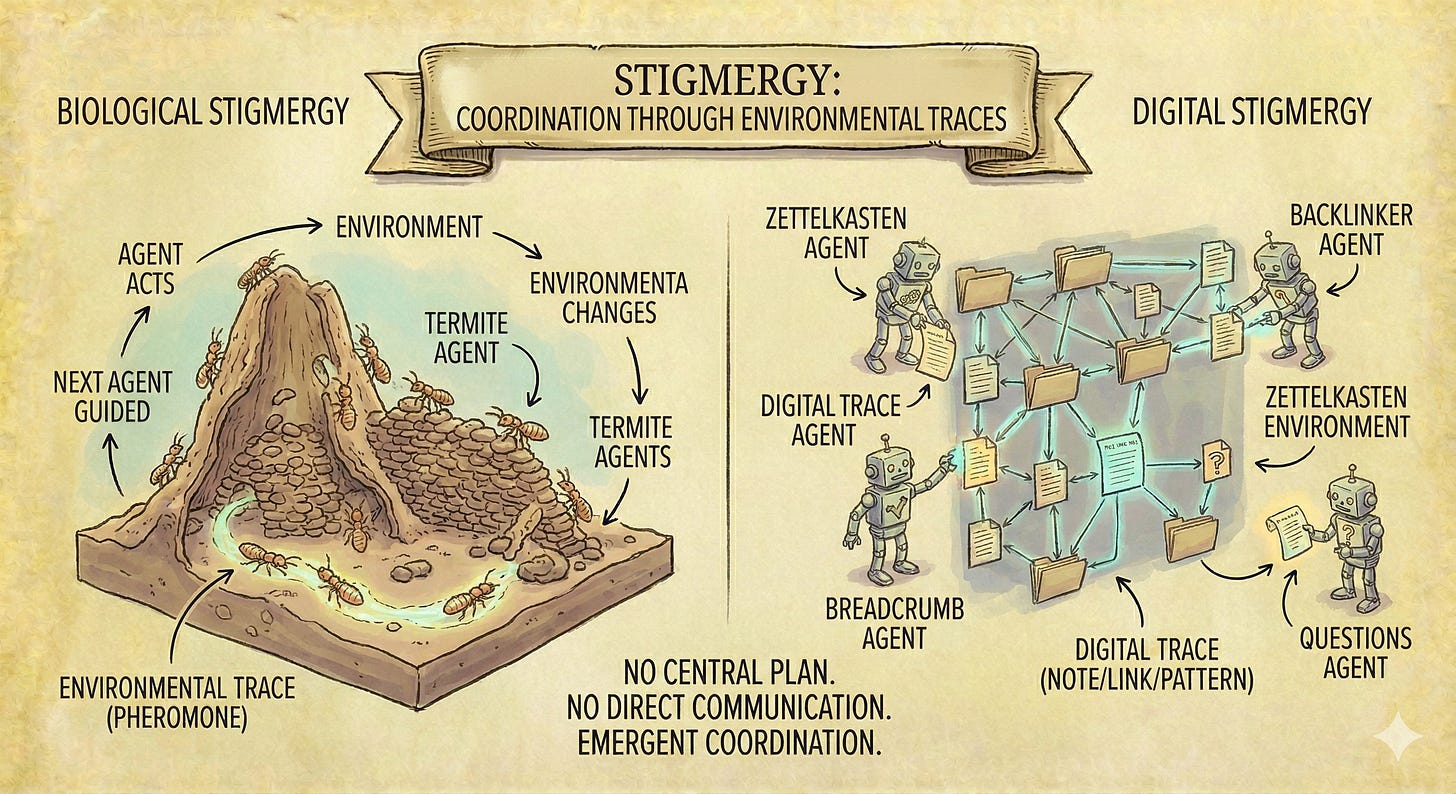

What emerges from this setup has a name: stigmergy.

Stigmergy is how termites build mounds and ants find food. No central plan. No communication between individuals. Just agents acting on a shared environment, leaving traces that guide subsequent action. One termite drops a mud pellet. The pellet’s presence stimulates the next termite to drop one nearby. Complex structure emerges from simple local rules.

First-class agents in a mutable environment produce the same dynamic. Each agent leaves persistent traces — notes, links, breadcrumbs, questions — that become the environment subsequent agents encounter. The zettelkasten agent creates notes. The backlinker finds them and adds connections. The breadcrumb agent reads those connections and identifies patterns. The questions agent reads those patterns and generates new directions.
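The dynamic can be sketched without any event queue at all. In this minimal model, hypothetical rules never message each other: each one looks for a trace in the shared vault and deposits a new one, and each sweep only reacts to traces that already existed when it began. Assumptions: the vault is a `Map` from note name to a set of trace markers, and the rule and marker names are invented for illustration; real agents would read and write markdown notes.

```typescript
// Sketch only: note name -> set of trace markers left on that note.
type Vault = Map<string, Set<string>>;

interface Rule {
  name: string;
  trigger: string; // the trace that stimulates this rule
  leaves: string; // the trace it deposits in response
}

const rules: Rule[] = [
  { name: "backlinker", trigger: "note", leaves: "linked" },
  { name: "breadcrumb", trigger: "linked", leaves: "recorded" },
  { name: "questions", trigger: "recorded", leaves: "questioned" },
];

// One sweep over the environment; returns how many traces were deposited.
function sweep(vault: Vault): number {
  // Decide first, then apply, so a sweep only reacts to pre-existing traces.
  const deposits: [Set<string>, string][] = [];
  for (const traces of vault.values()) {
    for (const rule of rules) {
      if (traces.has(rule.trigger) && !traces.has(rule.leaves)) {
        deposits.push([traces, rule.leaves]);
      }
    }
  }
  for (const [traces, mark] of deposits) traces.add(mark);
  return deposits.length;
}

const vault: Vault = new Map([
  ["termites", new Set(["note"])],
  ["event-queues", new Set(["note"])],
]);

// Keep sweeping until the environment stops changing.
let sweeps = 0;
while (sweep(vault) > 0) sweeps++;
console.log(sweeps, [...vault.get("termites")!]);
```

The two seed notes pick up `linked`, then `recorded`, then `questioned` over three productive sweeps, with nothing scheduling that order except the traces themselves.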

No orchestration framework. No delegation hierarchy. No central coordinator deciding who does what when. Just agents responding to environmental traces and leaving new ones. Coordination emerges from the environment itself.

This is the alternative to the framework approach. Frameworks impose top-down coordination: roles, delegation, supervision hierarchies. Stigmergy is bottom-up coordination through the shared medium. And it scales: adding a new agent just means adding a new file that listens for events, not restructuring an entire coordination graph.

Portable Patterns

An event bus with a bunch of agents is not a new idea³. But treating agents as a programming primitive (first-class, composable, mutable) shifts how you think. You stop designing individual agents and start conceiving of agent networks and feedback loops.

The best part of thinking in terms of networks and loops? Your thinking is portable to any expression of agent networks and loops. The zettelkasten pipeline — decompose, link, summarize, question — is a pattern, not an implementation. It could run on Busytown, via Agent Teams, on a different orchestrator, in a different substrate entirely. What transfers is the design: the decomposition into agents, the event flow, the stigmergic coordination.
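One way to keep the pattern separate from any runtime is to write it down as plain data and treat each substrate as a renderer. A minimal sketch: `Stage`, `renderBusytown`, and `checkChain` are hypothetical names, the renderer targets the frontmatter layout shown earlier (minus `allowed_tools`), and event names other than `capture.request` and `capture.ingested` are invented. A renderer for another orchestrator would consume the same array.

```typescript
// The design, independent of any runtime.
interface Stage {
  name: string;
  listen: string;
  emits: string;
  prompt: string;
}

const zettelkastenPattern: Stage[] = [
  { name: "zettelkasten", listen: "capture.request", emits: "capture.ingested", prompt: "Decompose captured text into atomic notes." },
  { name: "backlinker", listen: "capture.ingested", emits: "notes.linked", prompt: "Add bidirectional wikilinks between related notes." },
  { name: "breadcrumb", listen: "notes.linked", emits: "history.recorded", prompt: "Record what changed and note emerging patterns." },
  { name: "questions", listen: "history.recorded", emits: "questions.generated", prompt: "Generate exploratory questions from recent changes." },
];

// One possible target: Busytown-style markdown files keyed by path.
function renderBusytown(pattern: Stage[], model = "sonnet"): Map<string, string> {
  const files = new Map<string, string>();
  for (const stage of pattern) {
    const text = [
      "---",
      `description: ${stage.prompt}`,
      "listen:",
      `- "${stage.listen}"`,
      "emits:",
      `- "${stage.emits}"`,
      `model: ${model}`,
      "---",
      stage.prompt,
    ].join("\n");
    files.set(`agents/${stage.name}.md`, text);
  }
  return files;
}

// Runtime-independent check: each stage consumes what the previous one emits.
function checkChain(pattern: Stage[]): boolean {
  return pattern.every((stage, i) => i === 0 || pattern[i - 1].emits === stage.listen);
}

const files = renderBusytown(zettelkastenPattern);
console.log([...files.keys()]);
```

The check runs on the pattern itself, so the event flow can be validated once and then rendered to whichever substrate is being tried next.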

This is what first-class agents actually afford. Not just self-modifying AI or clever automation: a way to discover agent architectures through rapid experimentation, then port those discoveries forward.

In my experience, the runtime dynamics of agent networks are too complex to design on a whiteboard. But with agents as a programming primitive, you can vibecode a network in markdown, playtest it in an hour, and discover patterns that transfer everywhere.

Then, the game shifts back to design research: what are the useful topologies of agent networks, and how do we compose digital experiences fluently with them?

We wouldn’t even have React without first-class functions!

Though, we are probably iterating our way to rediscovering the actor model.